Goldman Sachs Automated eBay Site Crawler Aggregator

Site Crawler Aggregator

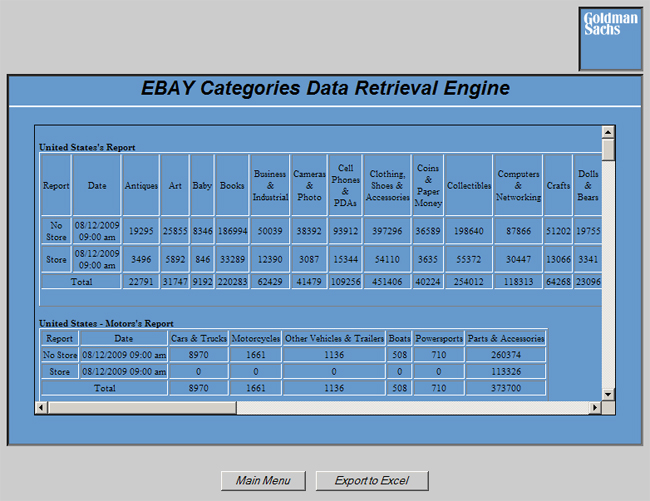

Goldman Sachs needed an application that would automatically “crawl” a pre-defined list of pages on different eBay sites, collect information from them, and consolidate the data into an easy-to-read report for statistical analysis.

With those needs in mind, MVI consultants designed an application that pulls the data from eBay’s sites every day into a simple web-based reporting system. Each morning, a scheduled job on MVI’s servers runs a PHP script that visits every pre-defined page using the cURL library. The script then applies regular expressions, a pattern-matching mechanism, to extract the information Goldman Sachs requires from each page and inserts it into a purpose-built MySQL database.
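The fetch, extract, and store steps described above can be sketched roughly as follows. This is a minimal illustration only, not MVI's code: the production script was written in PHP with cURL and MySQL, whereas this sketch uses Python's standard library, with `urllib` standing in for cURL and SQLite standing in for MySQL. The HTML markup, the `ITEM_PATTERN` expression, the `items` table, and all function names are hypothetical, since the source does not say which fields were collected or how the pages were structured.

```python
import re
import sqlite3
from urllib.request import urlopen

# Hypothetical markup: the real page structure and fields are not given in the source.
ITEM_PATTERN = re.compile(
    r'<li class="item">\s*<span class="title">(?P<title>[^<]+)</span>\s*'
    r'<span class="price">\$(?P<price>[\d,]+\.\d{2})</span>'
)


def fetch(url, timeout=30):
    """Download one page and return its body as text (stand-in for the cURL call)."""
    with urlopen(url, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


def extract_items(html):
    """Return a (title, price) tuple for every item the pattern matches."""
    return [
        (m.group("title").strip(), float(m.group("price").replace(",", "")))
        for m in ITEM_PATTERN.finditer(html)
    ]


def store_items(conn, url, items):
    """Insert the extracted rows, creating the (assumed) table on first use."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS items ("
        "url TEXT, title TEXT, price REAL, "
        "collected_on TEXT DEFAULT CURRENT_DATE)"
    )
    conn.executemany(
        "INSERT INTO items (url, title, price) VALUES (?, ?, ?)",
        [(url, title, price) for title, price in items],
    )
    conn.commit()


def crawl(conn, urls):
    """Visit each pre-defined URL, extract its items, and store them."""
    for url in urls:
        store_items(conn, url, extract_items(fetch(url)))
```

A daily scheduler such as cron would call `crawl` with the pre-defined URL list. Regular expressions keep the script small, but they are tied to the exact markup of each page, so any change to a page's layout would mean updating the pattern.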

From there, the results are made available through an online PHP-based web application, where Goldman Sachs staff can log in and quickly retrieve and review all of the collected data. This application has helped Goldman Sachs become more efficient and has freed them to focus their efforts on more productive tasks. Through the creation and implementation of projects like this one, MVI has become very adept at developing web automation tools.